
Sovereignty… yes, I know, I just dropped one of the most overloaded buzzwords of the year. But bear with me.

All jokes aside, the geopolitical context has changed. Overnight, things we assumed were stable suddenly feel conditional. What happens if tariffs impact our cloud bill? What if access to certain AI models changes? Are we truly in control of our data, or are we only comfortable because nothing has gone wrong yet? These aren’t theoretical questions anymore, especially in Europe; they are becoming board-level conversations.

That’s why I prefer the term "sovereign platforms," not just "sovereign AI." This is not only about AI-specific architectures and challenges. It’s about the broader foundation on which we build and run our digital capabilities. And that’s where the paradox kicks in.

Cloud and AI platforms promise speed, scalability, and innovation. And they absolutely deliver. But the same platforms can also introduce structural dependency, security risks, larger attack surfaces, rising cognitive load, and long-term strategic exposure. Convenience today can quietly become an operational challenge tomorrow.

The discussion in European boardrooms is shifting: The question is no longer “Should we adopt cloud or AI?” That decision has largely been made. The real question is: Who ultimately controls the platform and the data our organisation depends on?

In this article, I’ll explore how we can handle these challenges. But before jumping to solutions, we need to align on a few key concepts: cognitive load, sovereignty, and platform engineering.

That will also shape the flow of this article: first, we’ll clarify those core ideas. Then we’ll zoom in on what they actually mean in practice, specifically in the context of sovereign (AI) platforms. (And along the way, to make things easier to digest, we’ll add some references to going to the pub.)

Key concepts

Today’s digital landscape is no longer a simple stack of applications and infrastructure. It has become a layered ecosystem of tools, APIs, pipelines, security controls, compliance frameworks, financial governance, and increasingly AI and MLOps capabilities. What used to be “just development” now spans DevSecOps, FinOps, and MLOps, and the responsibilities keep expanding.

This results in increased cognitive load: the mental effort required to understand, navigate, and operate within a system. When that effort becomes excessive, it limits focus, productivity, and innovation.

Highly skilled (software and AI) engineering teams spend a growing portion of their time navigating infrastructure abstractions, policy constraints, integration patterns, and platform dependencies and fragmentation instead of building capabilities and features that truly differentiate the business. For leadership, the impact surfaces elsewhere: slower time to market, rising operational exposure, and a total cost of ownership that is harder to control and justify.

Complexity is not merely a technical side effect of scale; it acts as a structural tax on innovation, and a rather expensive one.

Reducing cognitive load, therefore, is not about making life easier for developers. It is about restoring organisational execution capacity and regaining focus. When teams can concentrate on their core domain instead of platform friction, innovation accelerates, risk decreases, and strategic initiatives move from PowerPoint back into production.

My own interpretation is straightforward: cognitive load increases when you have too many components to monitor, integrate, or maintain, and when responsibilities start extending beyond your core focus.

Take a simple example. When I order a cocktail at a bar, I just ask for a mojito. My core responsibility is enjoying the drink. I do not want to know the recipe by heart, including the ingredients, the proportions, and the preparation steps. That is the barkeeper’s job.

In other words, I want my cognitive load to remain manageable. I want to focus on what I am there for, not on the operational details behind the scenes.

Source: ChatGPT 5.2, prompted by Maarten Vandeperre


Well, where do we start?

We could easily write an entire book about sovereignty, but let’s keep it pragmatic for the purpose of this article. For many organisations, sovereignty is still almost synonymous with keeping everything on premises. Given the current geopolitical context, that reaction is understandable. Legislation such as the US CLOUD Act allows US authorities to request customer data from American hyperscalers, regardless of where the servers are physically located, and in certain cases providers are not allowed to inform the affected customer. Discussions within the French Senate have likewise highlighted that hyperscalers cannot fully guarantee protection from US jurisdictional reach.

However, abandoning public cloud altogether cannot be the objective either. Public cloud delivers undeniable benefits in scalability, innovation speed, and access to advanced capabilities, especially in AI. Ignoring that would mean ignoring reality. That is precisely why, at Red Hat, we strongly advocate an open hybrid cloud approach: keeping your options open while maintaining control. We will come back to that shortly.

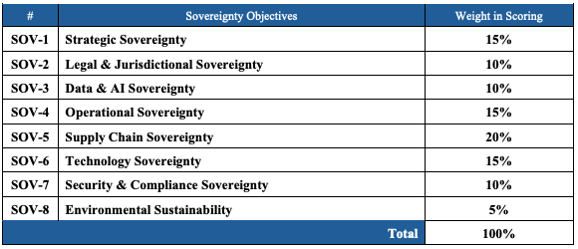

Sovereignty, therefore, is not simply about running on premises. It goes much further. The European Commission frames sovereignty across multiple dimensions, including data, AI, technology, and supply chain sovereignty. It is a broader strategic construct, not a hosting decision. Ultimately, sovereignty is about business continuity and risk management: ensuring that critical operations remain viable even when geopolitical, regulatory, or vendor conditions change.

My own interpretation is straightforward: be as independent as reasonably possible from single vendors and proprietary technologies, and remain in control of your data. Know where your data resides. Understand who can access it. And ensure that these are conscious choices, not accidental consequences of architectural decisions.

If we go back to the bar analogy, sovereignty simply means you are not obliged to follow the crowd. If you want to go to another bar, you are free to do so. If you prefer wine over beer, that is your choice. If you would like to order a Heineken… well, sorry, I am Belgian, we will not take it that far. For the Heineken lovers among us, it is just a joke. Right?

Being sovereign is about keeping your options open. You can fall in love with a technology, a vendor, or an AI model, but do not marry them. And no, this time we will not stretch the pub analogy any further.

And if you would like to validate your sovereignty readiness, you can make use of the sovereignty readiness assessment tool.

Source: ChatGPT 5.2, prompted by Maarten Vandeperre

When we talk about sovereignty, the conversation often focuses on geography: where does the data reside, in which country are the servers located, under which jurisdiction does the provider fall? Those questions matter. But, as mentioned above, sovereignty is also about technological control, and that is where open source plays a decisive role.

Open source reduces structural dependency. It gives organisations visibility into how systems work, how they are secured, and how they evolve. There is no opaque API, no hidden roadmap, no black box that only one vendor can interpret. That transparency creates leverage. You are not dependent on a single supplier to maintain, adapt or extend critical components. You can choose partners, build internal expertise, or combine ecosystems. That optionality is sovereignty in practice.

It is important to be realistic. Open source does not automatically mean sovereign. Governance, operational discipline, and lifecycle management still matter. But open foundations make portability and interoperability structurally possible. They keep architectures adaptable as regulations, cost structures or geopolitical realities shift. In that sense, open source is not just a development philosophy. It is a strategic design choice that keeps your options open and your organisation in control.

And that’s what we can offer with the Red Hat stack: because it is 100% open source, you are assured of business continuity. Enterprise-grade open source technologies such as OpenShift are built on fully open source components, but are delivered with integrated security, certified ecosystems, long-term support, and consistent lifecycle management. The openness ensures that workloads are not trapped in a closed ecosystem. The enterprise layer ensures operational stability and accountability.

That combination matters. Because the code is open, there is no single-vendor technical lock on your workloads. You are not dependent on proprietary runtimes or hidden extensions to keep your systems running. And because the platform is supported and maintained with enterprise rigour, you gain business continuity, predictable updates, and a clear support model.

Sovereignty is not about rejecting vendors. It is about avoiding structural dependency. An open, enterprise-grade stack provides both innovation and continuity, allowing organisations to move, adapt, and scale without losing control.

Like cognitive load and sovereignty, we could easily write an entire book about platform engineering. According to platformengineering.org, it can be summarised as:

“Platform engineering is the discipline of designing and building toolchains and workflows that enable self-service capabilities for software engineering organisations in the cloud-native era. Platform engineers provide an integrated product most often referred to as an ‘Internal Developer Platform’ covering the operational necessities of the entire lifecycle of an application.”

In essence, platform engineering enables abstraction. It transforms infrastructure, security, compliance, and operational complexity into curated, centralised, and reusable services that teams can consume on demand. Instead of every team solving the same problems repeatedly, the organisation provides standardised capabilities through self-service interfaces. This reduces fragmentation, embeds governance by design, and shifts effort away from coordination toward value creation.

Consider the difference between a traditional setup and a platform-driven model when requesting a database. Without a platform, multiple teams are involved, tickets circulate, specifications are debated, and responsibility becomes fragmented. Lead times increase, and risk accumulates. In a platform model, the request becomes a structured self-service action. A developer selects a predefined configuration, aligned with policy and best practices, and the provisioning happens automatically, securely and consistently. Governance is not an afterthought. It is built in. The result is shorter time to market, predictable risk exposure, and lower total operational friction.

Platform engineering also reduces your cognitive load considerably as a developer. You go to an internal portal, request a database via a form, and specify the database name, the technology, and a t-shirt size. The t-shirt size is linked to clear examples and usage patterns. Behind the scenes, that simple request is translated into infrastructure parameters, and the provisioning happens automatically in a standardised and governed way. No friction between teams, fewer security gaps, no ticket flood, and significantly shorter lead times. Efficiency translates directly into faster time to market.
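To make the t-shirt size idea a bit more tangible, here is a minimal sketch of how a platform could translate such a self-service request into infrastructure parameters. Everything in it is an assumption for illustration: the size catalogue, the allowed engines, the governance defaults, and the names `DatabaseRequest` and `to_provisioning_spec` are hypothetical and not taken from any specific product.

```python
from dataclasses import dataclass

# Hypothetical size catalogue; the numbers are illustrative assumptions.
SIZES = {
    "S": {"cpu_cores": 1, "memory_gib": 2, "storage_gib": 20},
    "M": {"cpu_cores": 2, "memory_gib": 8, "storage_gib": 100},
    "L": {"cpu_cores": 4, "memory_gib": 32, "storage_gib": 500},
}

# Hypothetical list of technologies the platform team has approved.
ALLOWED_ENGINES = {"postgresql", "mysql"}


@dataclass(frozen=True)
class DatabaseRequest:
    """What the developer fills in on the portal form."""
    name: str
    engine: str
    size: str


def to_provisioning_spec(request: DatabaseRequest) -> dict:
    """Translate a simple request into governed infrastructure parameters."""
    if request.engine not in ALLOWED_ENGINES:
        raise ValueError(f"engine '{request.engine}' is not offered by the platform")
    if request.size not in SIZES:
        raise ValueError(f"unknown t-shirt size '{request.size}'")

    spec = {"name": request.name, "engine": request.engine, **SIZES[request.size]}
    # Governance defaults are applied to every request, not chosen per team.
    spec["encrypted_at_rest"] = True
    spec["backups_enabled"] = True
    return spec


spec = to_provisioning_spec(DatabaseRequest(name="orders", engine="postgresql", size="M"))
print(spec)
```

The developer only ever sees three fields. The sizing details and the security defaults live in one place, owned by the platform team, which is where the reduction in cognitive load comes from.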

If we bring this back to the pub analogy, imagine ordering your drink via a QR code at your table. You select a cocktail in the app. If it is a complex one, the app translates your selection into the full recipe for the bartender. You do not need to walk to the bar. You do not need to understand the bartender’s dialect. You simply order through a clear interface. Even the bartender benefits, as he receives structured instructions instead of relying on memory or interpretation. Cognitive load decreases for everyone involved.

And that is what we see in practice. Platforms are adopted not only by developers but also by AI engineers, data scientists, and operations teams. Multiple personas benefit from the same structured foundation.

A final piece of the story is transparency and trust. A platform should allow you to know what is inside, avoid uncontrolled sprawl, and prevent surprises. This is where trusted software supply chains come into play. By embedding traceability, security controls, and compliance into the platform itself, you ensure that speed does not come at the cost of risk. In that sense, platform engineering is not a technical optimisation. It is an organisational strategy to scale innovation without scaling complexity.

Source: ChatGPT 5.2, prompted by Maarten Vandeperre

Sovereignty is often treated as a legal or geopolitical discussion, but in practice, it becomes real only when it is engineered into the platform. It is not a policy slide in a board deck. It is a design choice. A sovereign posture means you deliberately avoid single points of dependency, you maintain visibility into your data and workloads, and you preserve the ability to move, adapt, or replace components when circumstances change. That flexibility does not happen by accident. It must be built into the platform architecture from day one.

Think about our pub again. Being sovereign does not mean you brew your own beer at home and refuse to enter any bar. It means you are free to choose where you go. You can switch pubs without losing your favourite bartender. You can order from a broad menu, not just what one supplier dictates. If one pub suddenly changes the rules or raises the prices dramatically, you are not stuck. The freedom to move is what creates leverage, and leverage creates control.

In platform terms, sovereignty is a capability delivered through portability, open standards, transparent supply chains, and abstraction layers that decouple applications from underlying infrastructure. When your platform allows workloads to run across environments, when your data location and access are transparent, and when vendor-specific services are not deeply hardcoded into your architecture, sovereignty becomes operational rather than theoretical. It stops being ideology and becomes a practical risk management instrument embedded in how you build and run systems.

So far, we have established that sovereignty matters, particularly in the current geopolitical climate. Yet despite the urgency, relatively few organisations are building truly sovereign platforms. The reasons are fairly pragmatic. In an early, unscaled phase, consuming hosted services such as those from OpenAI can appear cheaper and faster. But many of these providers are still operating at significant losses, and sooner or later someone pays the bill. Pricing models change, dependencies deepen, and exit costs become visible only when it is already uncomfortable. And once usage scales, the perception of “cheap” often disappears quickly.

The second reason is more structural. Organisations simply do not know where to begin. Data is scattered, architectures are inconsistent, ownership is unclear, and teams are unsure what to do with their confidential data. The cognitive load is overwhelming. Leaders may agree that sovereignty is desirable, but the path toward it feels complex and risky. When complexity is high, inertia wins.

This is where platform engineering becomes the enabler. If sovereignty feels heavy because the cognitive load is too high, the solution is not to abandon the ambition, but to reduce the load. Platform teams introduce standardisation, abstraction, and self-service capabilities that make sovereign choices operationally feasible. They allow teams to focus on domain value instead of infrastructure mechanics.

Back to the pub. At first, ordering a drink from a flashy pop-up bar seems cheap and convenient. No questions asked, quick service, low entry cost. But once you host a large event there every week, prices increase, rules change, and you realise you never learned how the drinks were actually made. Building your own well-organised bar may require effort upfront, but with the right setup and clear recipes, you regain control. And if the menu becomes too complex, you simplify it through a structured ordering system so that both guests and bartenders can focus on what they are there for.

How do you build a sovereign AI platform? Again, we could write an entire book about it. Let me highlight a few essential building blocks that make sovereignty tangible rather than theoretical.

A sovereign AI platform should not be tied to a single environment. Workloads must be able to run where it makes the most sense: on premises for sensitive data, in a private cloud for regulatory reasons, or in a public cloud when elasticity or specific services are required. An open hybrid cloud approach keeps infrastructure choices flexible and portable. It prevents deep coupling to proprietary services and ensures that moving workloads does not require a full rewrite. In practice, this means designing AI capabilities so they are consistent across environments, giving you the freedom to adapt when costs, regulations, or strategic priorities change.
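As a rough illustration of "run where it makes the most sense", the placement reasoning above could be captured as an explicit, reviewable rule instead of an ad hoc decision per project. This is only a sketch under stated assumptions: the `Workload` attributes, the environment labels, the rule order, and the fallback default are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of an AI workload to be placed."""
    name: str
    handles_sensitive_data: bool = False
    regulated: bool = False
    needs_elasticity: bool = False


def choose_environment(workload: Workload) -> str:
    """Pick a target environment; the strictest requirement wins."""
    if workload.handles_sensitive_data:
        return "on-premises"
    if workload.regulated:
        return "private-cloud"
    if workload.needs_elasticity:
        return "public-cloud"
    # Assumed default when no requirement applies.
    return "private-cloud"


for w in (
    Workload("fraud-scoring", handles_sensitive_data=True, needs_elasticity=True),
    Workload("claims-triage", regulated=True),
    Workload("marketing-batch", needs_elasticity=True),
):
    print(w.name, "->", choose_environment(w))
```

The point is not the specific rules but that placement becomes a policy you can change in one place when costs, regulations, or priorities shift, provided the workloads themselves are portable.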

Sovereignty also means freedom of choice at the model layer. AI should not be a black box consumed through a single API without visibility or alternatives. Organisations need the ability to evaluate, switch, fine-tune, and host different models depending on performance, cost, compliance, or domain specificity. This includes supporting both open and commercial models, running them where appropriate, and avoiding tight integration with one vendor’s ecosystem. Model flexibility reduces lock-in and ensures that AI strategy remains a business decision rather than a technical constraint.
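One way to keep that freedom of choice at the code level is to have applications depend on a small interface instead of a specific vendor SDK. The sketch below assumes a hypothetical `TextModel` interface and two stand-in implementations that only echo their input; no real model or provider API is called, and all names are illustrative.

```python
from typing import Callable, Dict, Protocol


class TextModel(Protocol):
    """The only contract the application code depends on."""
    def generate(self, prompt: str) -> str: ...


class LocalOpenModel:
    """Stand-in for a self-hosted open model (no real inference here)."""
    def generate(self, prompt: str) -> str:
        return f"[local-open] {prompt}"


class HostedCommercialModel:
    """Stand-in for a commercial model behind a vendor API (no real call here)."""
    def generate(self, prompt: str) -> str:
        return f"[hosted-commercial] {prompt}"


# Hypothetical registry: switching models becomes a configuration change.
MODEL_REGISTRY: Dict[str, Callable[[], TextModel]] = {
    "local-open": LocalOpenModel,
    "hosted-commercial": HostedCommercialModel,
}


def load_model(name: str) -> TextModel:
    if name not in MODEL_REGISTRY:
        raise KeyError(f"no model registered under '{name}'")
    return MODEL_REGISTRY[name]()


def summarise(model: TextModel, text: str) -> str:
    """Business logic that never mentions a vendor."""
    return model.generate(f"Summarise: {text}")


print(summarise(load_model("local-open"), "quarterly report"))
print(summarise(load_model("hosted-commercial"), "quarterly report"))
```

Because `summarise` only knows about `TextModel`, swapping or adding a model does not touch the business logic, which is what keeps the model choice a business decision.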

Open source plays a critical role in keeping control. It provides transparency into how components work, how they are secured, and how they evolve. It reduces dependency on proprietary interfaces and opaque roadmaps. Open technologies encourage interoperability and portability, which are foundational for sovereignty. More importantly, they allow organisations to inspect, adapt, and extend capabilities rather than being limited to predefined features. This transparency is essential when AI becomes part of mission-critical processes.

Finally, sovereignty requires built-in governance. Guardrails around data access, model usage, explainability, traceability, and software supply chains must be embedded in the platform itself. AI should not operate as an isolated experimentation layer detached from compliance and risk management. Policies, auditing, lineage tracking, and secure supply chains ensure that innovation does not compromise control. Governance is not about slowing teams down. When implemented at the platform level, it actually accelerates adoption because teams operate within clear, trusted boundaries.
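To show what "embedded in the platform itself" could look like, here is a minimal sketch of a guardrail that checks every model call against a data-classification policy and records the decision for auditing. The policy table, the classification labels, and the names `AuditLog` and `authorise` are assumptions made for this example only.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List

# Hypothetical policy: which data classifications each model may receive.
MODEL_POLICY = {
    "local-open": {"public", "internal", "confidential"},
    "hosted-commercial": {"public"},
}


@dataclass
class AuditLog:
    """In-memory audit trail; a real platform would persist this."""
    entries: List[dict] = field(default_factory=list)

    def record(self, user: str, model: str, classification: str, allowed: bool) -> None:
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "model": model,
            "classification": classification,
            "allowed": allowed,
        })


def authorise(user: str, model: str, classification: str, log: AuditLog) -> bool:
    """Allow the call only if policy permits it, and always leave a trace."""
    allowed = classification in MODEL_POLICY.get(model, set())
    log.record(user, model, classification, allowed)
    return allowed


log = AuditLog()
print(authorise("alice", "hosted-commercial", "confidential", log))
print(authorise("alice", "local-open", "confidential", log))
print(len(log.entries), "decisions recorded")
```

Teams do not have to remember the rules; the platform applies them on every call and keeps the evidence, which is why governance at this level tends to speed adoption up instead of slowing it down.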

Building a sovereign AI platform is not simply about running models somewhere. It is about establishing a flexible, transparent, and governed foundation that preserves freedom of choice while keeping the organisation firmly in control.

I would like to close with a remark from a participant at a recent AI conference. He said, “By default, we go to the cloud for AI development. But sometimes we realise we are missing control, especially around the model lifecycle.” That observation captures the tension many organisations experience. Cloud offers convenience and speed, yet visibility and operational control can become secondary.

What resonated during the demo of OpenShift, OpenShift AI, and Developer Hub (it was a Red Hat-oriented talk) was that you can achieve a cloud-like developer experience across environments, while still retaining insight into what runs under the hood. Workloads can move. Models can be governed. Lifecycle management remains transparent. And control can stay where it belongs, whether with SRE teams, operations, or centralised platform teams. That balance between experience and control is what ultimately makes sovereignty practical rather than theoretical.

And yes, we could go much deeper. If you would like to explore how such an approach can be implemented in practice, let’s continue the conversation. Preferably over a drink.

So reduce your cognitive load. Stay sovereign. Apply platform engineering principles within your organisation, and enjoy your drinks.
